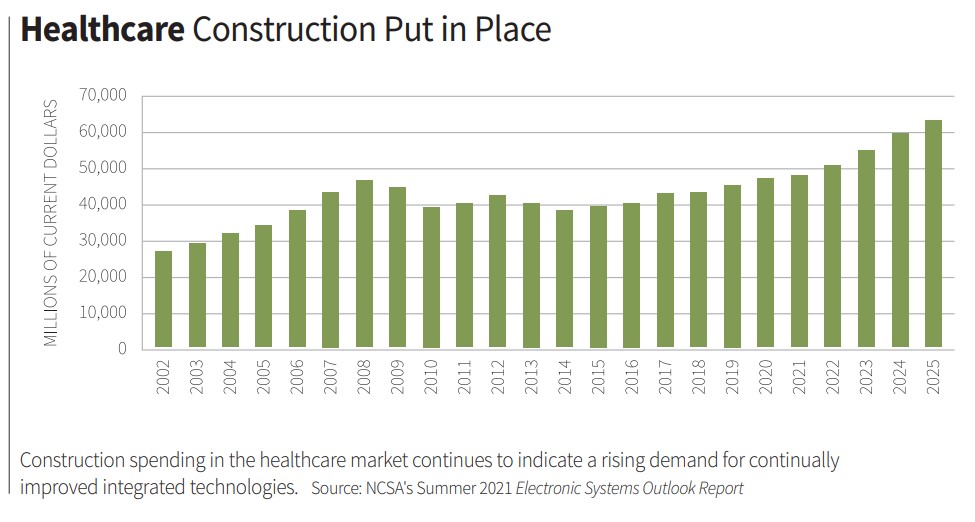

“11 Ways the Integration Business Will Change This Decade” is a wide-ranging analysis by NSCA board members of the future of integration, originally published in the special Pivot to Profit+ section of the Q3 2021 edition of our quarterly trade journal, Integrate. This column discusses healthcare technology advancements in the next decade.

The following healthcare technology advancements are on track to vastly change the acute care arena over the next 10 years.

Internet of Things (IoT)/Internet of Medical Things (IoMT)

We predict that the push for integration will accelerate among systems that interconnect, either directly or indirectly via APIs and third-party middleware, not just to improve the user experience from an operational perspective but also to improve data aggregation for live, historical, and predictive insights. While vast amounts of data are collected today via nurse call, electronic health records (EHRs), vital sign tracking systems, notification systems, and real-time locating systems (RTLS), that data is rarely analyzed in a comprehensive manner. When it is, analysts must manually merge data sets from independent systems, which is extremely time-consuming.
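To make the merge problem concrete, here is a minimal sketch of the kind of join an analyst performs by hand today: pairing nurse call events with RTLS room entries to estimate response times. Everything in it is an illustrative assumption rather than any vendor's export format, including the field names (`room`, `placed_at`, `entered_at`, `badge`), the sample records, and the five-minute matching window.

```python
from datetime import datetime, timedelta

# Hypothetical exports from two independent systems; field names are assumptions.
nurse_calls = [
    {"room": "412", "placed_at": datetime(2021, 9, 1, 14, 0), "reason": "pain"},
    {"room": "415", "placed_at": datetime(2021, 9, 1, 14, 10), "reason": "water"},
]
rtls_entries = [
    {"room": "412", "entered_at": datetime(2021, 9, 1, 14, 3), "badge": "RN-07"},
    {"room": "415", "entered_at": datetime(2021, 9, 1, 14, 40), "badge": "RN-02"},
]

def merge_calls_with_visits(calls, entries, window=timedelta(minutes=5)):
    """Pair each call with the first staff entry into the same room within `window`."""
    merged = []
    for call in calls:
        deadline = call["placed_at"] + window
        candidates = [
            e for e in entries
            if e["room"] == call["room"]
            and call["placed_at"] <= e["entered_at"] <= deadline
        ]
        # Earliest matching entry, or None when nobody arrived inside the window.
        first = min(candidates, key=lambda e: e["entered_at"], default=None)
        merged.append({
            "room": call["room"],
            "reason": call["reason"],
            "responder": first["badge"] if first else None,
            "response_time": first["entered_at"] - call["placed_at"] if first else None,
        })
    return merged

report = merge_calls_with_visits(nurse_calls, rtls_entries)
```

Even this toy join depends on both systems agreeing on room identifiers and clock time, which is part of why standardized reporting across systems matters.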

We will experience a push to standardize reporting across these systems, likely deploying artificial intelligence for the heavy lifting. As this happens, we will see increased integration, leading to additional interconnected devices. Today’s data supports only basic analytics; it will support advanced analytics in the short term and, ultimately, predictive analytics that will look and feel like artificial intelligence and machine learning in the long term. Nurse call, RTLS, ancillary notification systems, and smart rooms will contribute, but we believe that EHRs will be the richest source of all. At some point, the data will be sanitized so that patient-specific information no longer exists, which will allow it to be shared and processed. This will lead to quicker diagnoses, better patient outcomes, and an improvement to the health profession.

Integration will also be seen in IoT/IoMT, resulting in the proliferation of smart patient rooms. Everything in the room will be connected and will contribute to an improved experience for patients and caregivers. Caregiver workflows will evolve, allowing caregivers to focus on the patient while rooms “learn” patient preferences and adapt over time. This will likely include room temperature, room brightness, favorite TV channels at certain times, and nurse calling patterns (water, pain, toilet). It will incorporate voice control. Artificial intelligence (AI) will preemptively sense when the patient is attempting to get out of bed, automatically turn the lights on if the room is dark, and notify the nursing staff, helping to prevent patient falls. Additionally, AI will be used to predict the onset of post-surgical complications. Perhaps most importantly, AI will allow nurses to document unverified data in the EHR while in the room and confirm it later when convenient, ultimately resulting in fully automated documentation.

Currently, hospital systems want to keep all data, systems, and servers completely within their firewalls to avoid breaches of patient data. This barrier will need to be overcome, and we believe it will be in the long term, possibly with the adoption of 5G. When the additional bandwidth of 5G is used to enhance security to the point that traditional wired networks are no longer needed to reduce risk and are abandoned, we imagine that the devices we install today will all incorporate 5G chipsets for standard operation as well as for offsite data collection and monitoring. Couple that with predictive device failure, device stress monitoring, and automated service call generation, and we will have maximized IoT/IoMT in the acute care arena from the nurse call industry’s perspective.

Augmented Reality (AR)

We see AR as the future of caregiving and system service/support delivery. Technicians require a unique combination of IT and analog skills. We predict that the analog skillset will become rarer as the industry continues to trend toward IT-centric skills. The tension between these two needs makes it increasingly difficult to find staff who meet both requirements. With AR, companies can bring aboard technicians who are not proficient across the entire technical continuum and quickly fill the gaps in their skills.

A team of experts can be located at the office and accessed by technicians wearing AR-enabled glasses. The centralized team can provide guidance to less experienced technicians, reducing operational costs through less travel, lowering exposure to risk, and perhaps creating a promotion path for senior technicians who can no longer perform the physical labor. The centralized knowledge base will also help with system issue resolution in service and support scenarios.

Building information modeling (BIM) will provide benefits in conjunction with AR. Technicians can “see” an overlay of the infrastructure while they’re walking down the hallway, so they know where to pull the cable and which conduit is theirs. They can also pull in project management immediately when necessary and call meetings with other trades for decision-making while they’re standing at the location where the issue exists. This will shorten answer time, push projects forward, and allow continuous training while minimizing costs.

While AR has a high upside for system implementation and service delivery, we predict its largest impact will be on the caregiver experience with the nurse call system. Initially, AR will function as an additional integration layer on top of the interconnected devices and systems in use today (nurse call, wireless phones, RTLS, EHR, etc.). Ultimately, AR could replace many aspects of the integrated nurse call ecosystem, to the point that nurse call as we know it ceases to exist.

A notification initiated today without AR includes wireless annunciation, audible annunciation, and visual annunciation via flashing lights in the hallways.

With AR, staff on duty will receive a visual message showing the patient’s name and other information, the call priority type, and room directionality/wayfinding. Room direction will either be determined in conjunction with RTLS or come from location information contained within the AR system itself, which may render RTLS unnecessary. The caregiver will be able to open a communication path to the patient, rendering legacy integration to wireless phones and pagers unnecessary.

High-urgency calls, such as code blue and staff assist, will have more aggressive tones and flashing or different visual indications. Depending on space on the AR device display and effectiveness, the patient’s EHR documentation and medical history will be displayed to the caregiver when the notification is received or, if the call is received while working with another patient, when accepted by the caregiver. If never accepted, it will be shown upon entry into the room. These improvements will allow caregivers to provide better care and lead to better patient outcomes.
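The display timing described above amounts to a small decision rule, sketched below. This is only an illustration of the logic as stated in the text, not the behavior of any existing AR or nurse call product; the names `ShowRecord` and `when_to_show_record` and both input flags are hypothetical.

```python
from enum import Enum

class ShowRecord(Enum):
    """Hypothetical moments at which the AR display presents the patient's record."""
    ON_NOTIFICATION = "on_notification"
    ON_ACCEPT = "on_accept"
    ON_ROOM_ENTRY = "on_room_entry"

def when_to_show_record(busy_with_other_patient: bool, call_accepted: bool) -> ShowRecord:
    """Return when the record should appear, following the rule described in the text."""
    if not busy_with_other_patient:
        # Caregiver is free: show the record as soon as the notification arrives.
        return ShowRecord.ON_NOTIFICATION
    if call_accepted:
        # Caregiver was with another patient: wait until the call is accepted.
        return ShowRecord.ON_ACCEPT
    # Call was never accepted: fall back to showing the record on room entry.
    return ShowRecord.ON_ROOM_ENTRY
```

A real system would also have to weigh the display-space and effectiveness constraints mentioned above, which this sketch leaves out.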

This is a forward-thinking document about healthcare technology advancements from the group led by NSCA Board Member Ray Bailey, CEO at Lone Star. This was written by Justin Bailey, Cliff Switzer, Brian Banks, and Jeff Richard.